
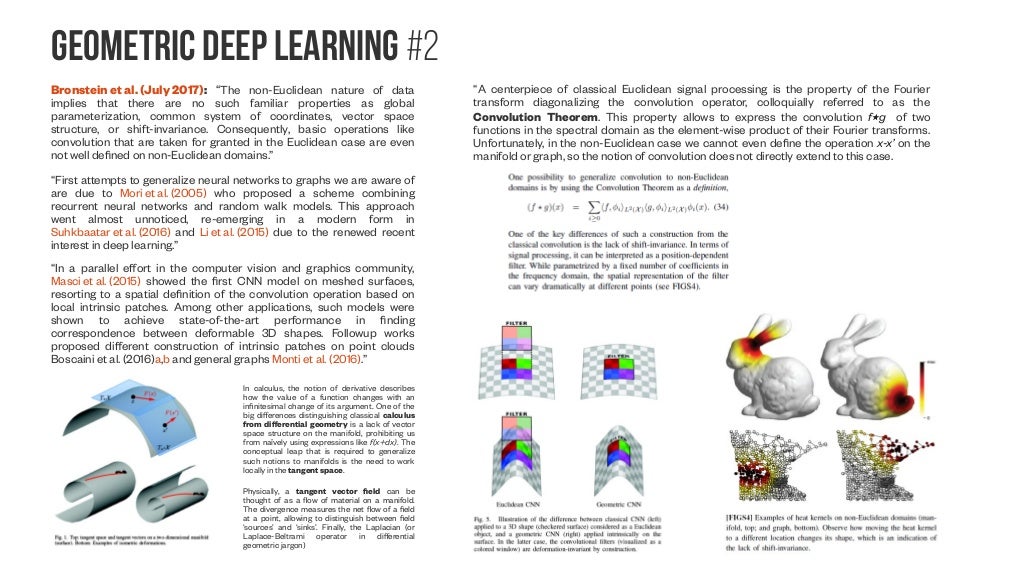

One of the key challenges in GDL is designing graph convolutional neural networks (GCNNs) that can effectively process graphs of varying sizes and structures. GCNNs aim to learn localized features by propagating information between neighboring nodes of a graph using message passing. The key is to design a convolution operation that respects the underlying graph structure. An image CNN relies on translation invariance; a graph convolution must instead give consistent results however the nodes happen to be ordered (permutation invariance or equivariance). There are several architectures for GCNNs, including spectral-based and spatial-based methods. Spectral-based methods are a family of algorithms that perform convolutions in the spectral domain of the graph Laplacian. The spectral approach, according to Professor Bronstein, “emerged from the work of Joan Bruna and coauthors using the notion of the Graph Fourier transform. The roots of this construction are in the signal processing and computational harmonic analysis communities, where dealing with non-Euclidean signals has become prominent in the late 2000s and early 2010s.” The graph Laplacian is a matrix that captures the connectivity and structure of the graph, and its eigenvalues and eigenvectors encode important spectral properties of the graph. The main idea behind spectral-based methods is to use these eigenvectors as a graph Fourier basis, which defines the convolution operation. A signal on the nodes is transformed into the spectral domain, filtered there, and transformed back. Because the filters are defined through the spectral properties of the graph Laplacian, these methods are well suited to graph signals with complex structures. One popular spectral-based GCNN architecture is the Graph Convolutional Network (GCN), proposed by Kipf and Welling in 2017. GCN simplifies the spectral convolution with a first-order approximation of the spectral filters, so each layer aggregates the features of a node and its immediate neighbors.
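For reference, the layer-wise propagation rule published by Kipf and Welling is

$$H^{(l+1)} = \sigma\left(\tilde{D}^{-1/2}\tilde{A}\tilde{D}^{-1/2} H^{(l)} W^{(l)}\right), \qquad \tilde{A} = A + I, \qquad \tilde{D}_{ii} = \sum_j \tilde{A}_{ij}$$

The following is a minimal, dependency-free sketch of one such layer. Several parts are illustrative assumptions, not code from the paper:

- ReLU as the nonlinearity σ.
- A dense adjacency matrix stored as nested lists.
- A toy three-node graph with an identity weight matrix.
- Helper names such as `normalized_adjacency` and `gcn_layer`.

```python
"""Sketch of one GCN layer (Kipf & Welling propagation rule), standard library only.

Assumptions (illustrative, not from the original post): dense adjacency as
nested lists, ReLU as the nonlinearity, hand-picked toy weights.
"""
import math


def matmul(x, y):
    # Plain matrix product for nested lists.
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]


def normalized_adjacency(adj):
    # A~ = A + I: add a self-loop so each node keeps its own features.
    n = len(adj)
    a_tilde = [[adj[i][j] + (1.0 if i == j else 0.0) for j in range(n)] for i in range(n)]
    # D~_ii is the row sum of A~; apply symmetric normalization D~^-1/2 A~ D~^-1/2.
    inv_sqrt_deg = [1.0 / math.sqrt(sum(row)) for row in a_tilde]
    return [[inv_sqrt_deg[i] * a_tilde[i][j] * inv_sqrt_deg[j] for j in range(n)]
            for i in range(n)]


def gcn_layer(adj, features, weights):
    # H' = ReLU(D~^-1/2 A~ D~^-1/2 H W)
    propagated = matmul(normalized_adjacency(adj), features)
    out = matmul(propagated, weights)
    return [[max(0.0, v) for v in row] for row in out]


if __name__ == "__main__":
    # Toy path graph 0 - 1 - 2 with one scalar feature per node.
    adj = [[0, 1, 0], [1, 0, 1], [0, 1, 0]]
    h = [[1.0], [0.0], [0.0]]
    w = [[1.0]]  # identity weight, so the output shows pure propagation
    print(gcn_layer(adj, h, w))
```

With the feature placed on node 0, one layer spreads it only to node 0 itself (weight 0.5) and to its direct neighbor, node 1 (weight 1/√6). Node 2, two hops away, still receives nothing. This is the one-hop locality the first-order approximation buys; stacking layers widens the receptive field.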
In traditional deep learning, data is represented as a grid of pixels or a vector of features; in GDL, data is represented as a graph, that is, a set of nodes (vertices) and the edges that connect them. GDL has shown great promise in fields such as computer vision, drug discovery, physics, and social network analysis. The core idea behind GDL is to generalize convolutional neural networks (CNNs), which are highly effective at processing grid-like data such as images, to non-Euclidean domains such as graphs and manifolds. In a traditional CNN, the convolution operation is performed on a grid of pixels, where each pixel is connected to its neighboring pixels in a fixed pattern. In GDL, the convolution operation is generalized to irregular graphs, where nodes can have arbitrary connections and varying numbers of neighbors.
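The contrast between a fixed pixel grid and an irregular graph can be sketched in a few lines of Python. This is a hypothetical illustration. It assumes plain mean aggregation over each node and its neighbors in place of a learned kernel, and the helper names `grid_to_graph` and `aggregate` are made up for this example. An image is turned into a graph whose nodes are pixels linked to their four neighbors. The same aggregation function then runs unchanged on a graph with arbitrary connections.

```python
"""Sketch: one neighbourhood-aggregation step on a pixel grid and on an irregular graph.

Assumption (illustrative): mean aggregation over a node and its neighbours
stands in for a learned convolution kernel.
"""


def grid_to_graph(height, width):
    # Each pixel becomes a node linked to its up/down/left/right neighbours:
    # the fixed connectivity pattern an image CNN implicitly assumes.
    neighbours = {}
    for r in range(height):
        for c in range(width):
            nbrs = []
            for dr, dc in ((-1, 0), (1, 0), (0, -1), (0, 1)):
                rr, cc = r + dr, c + dc
                if 0 <= rr < height and 0 <= cc < width:
                    nbrs.append((rr, cc))
            neighbours[(r, c)] = nbrs
    return neighbours


def aggregate(neighbours, signal):
    # One message-passing step: each node averages itself and its neighbours.
    # It only needs a neighbour list, so it works for any connectivity.
    return {
        node: (signal[node] + sum(signal[n] for n in nbrs)) / (1 + len(nbrs))
        for node, nbrs in neighbours.items()
    }


if __name__ == "__main__":
    # A 3x3 "image" with pixel values 0..8.
    image = {(r, c): float(r * 3 + c) for r in range(3) for c in range(3)}
    print(aggregate(grid_to_graph(3, 3), image)[(1, 1)])  # centre pixel

    # An irregular graph: node "a" has three neighbours, the others have one each.
    irregular = {"a": ["b", "c", "d"], "b": ["a"], "c": ["a"], "d": ["a"]}
    print(aggregate(irregular, {"a": 1.0, "b": 0.0, "c": 0.0, "d": 4.0}))
```

For the interior pixel, the result matches a plus-shaped averaging kernel. On the irregular graph, node `a` averages over three neighbors while `b`, `c`, and `d` each have only one. A single fixed-size grid kernel cannot express that difference.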

Abstract: Point cloud data is ubiquitous in scientific fields. Recently, geometric deep learning (GDL) has been widely applied to solve prediction tasks with such data. However, GDL models are often complicated and hardly interpretable, which poses concerns to scientists who are to deploy these models in scientific analysis and experiments. This work proposes a general mechanism, learnable randomness injection (LRI), which allows building inherently interpretable models based on general GDL backbones. LRI-induced models, once trained, can detect the points in the point cloud data that carry information indicative of the prediction label. We also propose four datasets from real scientific applications that cover the domains of high-energy physics and biochemistry to evaluate the LRI mechanism. Compared with previous post-hoc interpretation methods, the points detected by LRI align much better and stabler with the ground-truth patterns that have actual scientific meanings. LRI is grounded by the information bottleneck principle, and thus LRI-induced models are also more robust to distribution shifts between training and test scenarios.

What is geometric deep learning?

Geometric deep learning (GDL) is an emerging area of artificial intelligence. The term is an umbrella for techniques that generalize neural networks to Euclidean and non-Euclidean domains, such as graphs, manifolds, meshes, or string representations. Or, as Michael Bronstein, DeepMind Professor of AI at the University of Oxford, puts it: “Geometric Deep Learning is an umbrella term for approaches considering a broad class of ML problems from the perspectives of symmetry and invariance. It provides a common blueprint allowing to derive from first principles neural network architectures as diverse as CNNs, GNNs, and Transformers.” In practice, GDL is a subfield of machine learning focused on deep learning models for data with geometric structure, such as graphs, point clouds, meshes, and manifolds.
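For graphs, the "symmetry and invariance" perspective can be made concrete: relabeling the nodes should not change the answer. This is a hypothetical sketch, not a specific published architecture. It assumes simple sum-over-neighbors aggregation and a sum readout. It checks two properties over every ordering of a three-node graph:

- **Equivariance:** node-level outputs permute along with the input.
- **Invariance:** the graph-level readout does not change.

```python
"""Sketch: checking permutation equivariance and invariance on a small graph.

Assumptions (illustrative): adjacency matrix as nested lists, a layer that adds
each node's value to the sum of its neighbours' values, and a sum readout.
"""
import itertools


def aggregate(adj, x):
    # Node i receives its own value plus the sum over its neighbours.
    n = len(adj)
    return [x[i] + sum(adj[i][j] * x[j] for j in range(n)) for i in range(n)]


def readout(x):
    # Graph-level output: a sum does not depend on node order.
    return sum(x)


def permute(adj, x, perm):
    # Relabel nodes: new node k is old node perm[k].
    n = len(adj)
    new_adj = [[adj[perm[i]][perm[j]] for j in range(n)] for i in range(n)]
    new_x = [x[perm[i]] for i in range(n)]
    return new_adj, new_x


if __name__ == "__main__":
    adj = [[0, 1, 1], [1, 0, 0], [1, 0, 0]]  # node 0 linked to nodes 1 and 2
    x = [1, 2, 3]
    base = aggregate(adj, x)
    for perm in itertools.permutations(range(3)):
        p_adj, p_x = permute(adj, x, perm)
        out = aggregate(p_adj, p_x)
        # Equivariance: permuting the input permutes the node outputs the same way.
        assert out == [base[perm[i]] for i in range(3)]
        # Invariance: the graph-level readout is unchanged.
        assert readout(out) == readout(base)
    print("all 6 node orderings give consistent results")
```

Sum aggregation is only one of the simplest order-independent choices. Mean or max would pass the same checks, whereas a layer that treated neighbors differently by their position in a list would not.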
